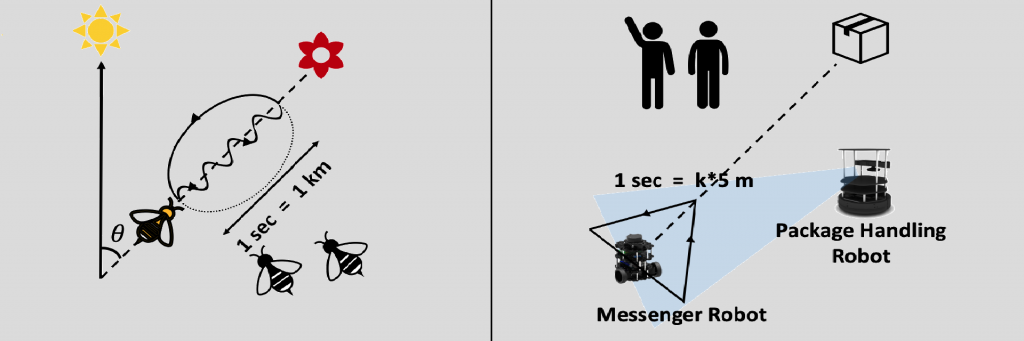

(Image courtesy: Abhra Roy Chowdhury)

Have you ever had to navigate a noisy room in search of a friend? Your eyes scour the room in the hope of catching sight of them, so that you can walk quickly in that direction. Now picture the same thing, but with robots instead. In recent work by Abhra Roy Chowdhury at the Centre for Product Design and Manufacturing, IISc, and Kaustubh Joshi at the University of Maryland, robots can now use visual cues instead of network-based communication, not only to navigate but also to deliver a package with the aid of a human.

A human first uses hand gestures to tell a messenger robot where a package must be delivered. The messenger robot then signals a package-handling robot by moving along paths in specific geometric shapes, such as a triangle, a circle or a square, to communicate the direction and distance to this destination. This approach is inspired by the ‘waggle dance’ that bees use to communicate with one another. The robots use an object detection algorithm and depth perception to detect and react to the gestures.
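
To make the idea of shape-based signalling concrete, here is a minimal Python sketch. It is only an illustration, not the researchers' implementation: the article does not say which shape encodes which direction or distance, so the codebook values, the function names `shape_waypoints` and `decode_shape`, and the 36-point approximation of a circle are all assumptions invented for this example.

```python
import math

# Hypothetical codebook: shape -> (heading in degrees, distance in metres).
# The real encoding is not given in the article; these values are made up.
SHAPE_CODEBOOK = {
    "triangle": (0.0, 1.0),
    "square": (90.0, 2.0),
    "circle": (180.0, 3.0),
}

# Number of vertices used to trace each shape (a circle is approximated
# by a 36-sided polygon; this is an assumption for illustration).
SHAPE_SIDES = {"triangle": 3, "square": 4, "circle": 36}


def shape_waypoints(shape, radius=0.5):
    """Messenger side: closed list of (x, y) waypoints tracing the shape."""
    n = SHAPE_SIDES[shape]
    points = [
        (radius * math.cos(2 * math.pi * k / n),
         radius * math.sin(2 * math.pi * k / n))
        for k in range(n)
    ]
    return points + [points[0]]  # return to the start to close the path


def decode_shape(shape, start=(0.0, 0.0)):
    """Receiver side: turn a recognised shape label into a goal position."""
    heading_deg, distance = SHAPE_CODEBOOK[shape]
    theta = math.radians(heading_deg)
    return (start[0] + distance * math.cos(theta),
            start[1] + distance * math.sin(theta))


if __name__ == "__main__":
    path = shape_waypoints("square")   # path the messenger robot would drive
    goal = decode_shape("square")      # goal the package robot would infer
    print(len(path), goal)
```

In this sketch the messenger robot would drive along `shape_waypoints(...)`, while the package-handling robot, having recognised the shape with its own vision system, would call `decode_shape(...)` to obtain a target position. The recognition step itself (object detection and depth perception) is outside the scope of this example.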

Such interactive robots can be deployed for search and rescue operations, package delivery, and in industrial settings where multiple robots talk to one another.